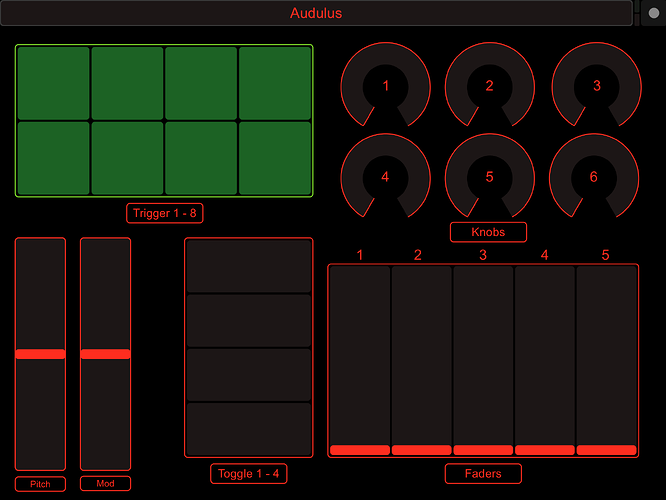

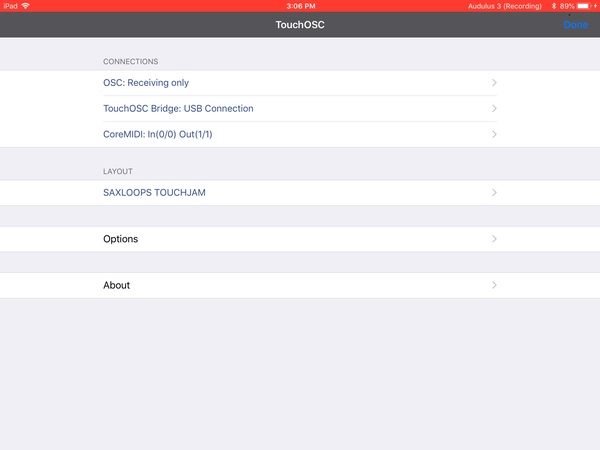

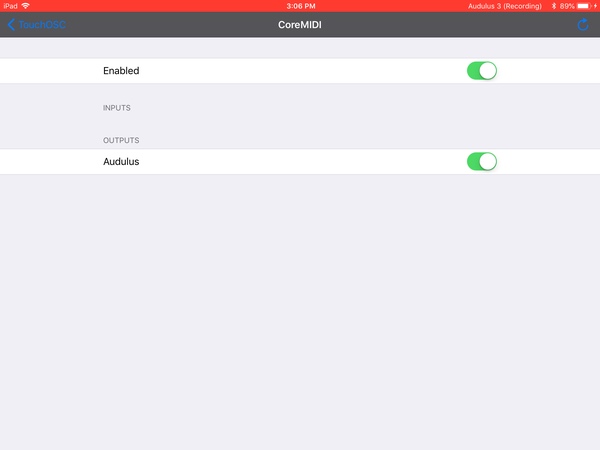

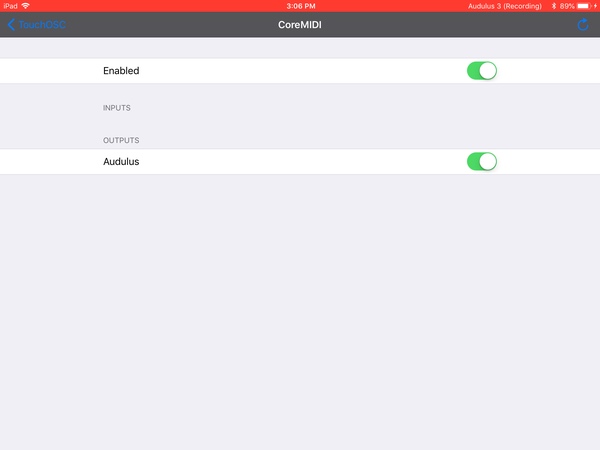

This is not, strictly speaking, hardware, but it seemed the best fit. I ported this over from the old Forum because @RobertSyrett recently acquired TouchOSC. For those of you who aren’t familiar with the app, TouchOSC is an iPad-based MIDI and OSC control application. Although it is primarily used as a DAW remote, it is quite a capable MIDI controller. It occurred to me that it might be possible to use it as an alternative control surface for Audulus.

I created a simple controller with 8 triggers (pads), 4 toggles, 6 rotary knobs, 5 vertical faders, a pitch bend and a modulation slider. I then created an Audulus patch with corresponding controls. I chose to make the toggles knobs rather than triggers in Audulus, although they are always fully on or off.

To use it, install the attached Audulus 1.touchosc layout in TouchOSC following the documentation. Use the controls in the attached Audulus patch as required to create your patch. To control Audulus on the iPad, make sure that Background Audio is on in Audulus (wrench icon). Load your patch, then load TouchOSC. Select the Audulus 1 layout and make sure that CoreMIDI is enabled and the Audulus slider is on. Select Done to display the layout.

You now need to operate all of the MIDI controls (select the pads, turn up the knobs, etc.). This will send MIDI to Audulus for each control. Switch back to Audulus to allow it to recognize the MIDI inputs. Audulus will not initially process the MIDI commands while it’s in the background (hopefully Taylor will fix this). You can then switch back to TouchOSC and you should be able to control your patch. Since TouchOSC is sending MIDI to Audulus, multitouch is fully supported. If you reload the patch or switch patches, you will need to repeat the process: operate all the controls, switch to Audulus, and switch back.

You can also use TouchOSC to control Audulus on the Mac or PC using the iPad as a control surface. TouchOSC is available from the iOS App Store for $4.99.
The editor will run on macOS, Windows or Linux and can be downloaded from Hexler.net. I have attached the sample layout and patch as well as a demo showing a few of the controls. In the demo, the pitch bend is connected, the mod slider is attached to the filter cutoff, trigger 1 is a conga, trigger 2 is a bell, toggle 1 turns the kick on and off, knob 1 is the clock frequency, and the sliders are mixer volumes for the oscillator, conga, bell, kick and overall output.

For those interested, the triggers (pads) are mapped to C-8 up (MIDI notes 120-127), the toggles are CC20-23, the knobs are CC24-29 and the faders are CC52-56. The pitch bend is mapped to the Pitch Bend message and the mod slider is mapped to CC1. Everything is set to MIDI Channel 8. This is the sample layout I built for Audulus.

BTW, TouchOSC is rock solid controlling Audulus on the Mac from the iPad using the TouchOSC MIDI bridge available from Hexler. It also supports CoreMIDI as well as OSC.
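For anyone who wants to check what the layout actually sends, here is a minimal Python sketch of the mapping described above. The note and CC numbers and the channel come from the post; the `LAYOUT` table and the helper names are hypothetical and only for illustration. The byte layouts are the standard MIDI 1.0 Note On, Control Change and Pitch Bend messages.

```python
# Raw MIDI bytes for the controls in the layout described above.
# LAYOUT and the helper names are illustrative, not part of TouchOSC or Audulus.

CHANNEL = 8  # 1-based channel number, as shown in the TouchOSC editor

LAYOUT = {
    "triggers": ("note", range(120, 128)),  # 8 pads, MIDI notes 120-127
    "toggles": ("cc", range(20, 24)),       # 4 toggles, CC20-23
    "knobs": ("cc", range(24, 30)),         # 6 rotary knobs, CC24-29
    "faders": ("cc", range(52, 57)),        # 5 vertical faders, CC52-56
    "mod": ("cc", range(1, 2)),             # modulation slider, CC1
}


def note_on(note, velocity=127, channel=CHANNEL):
    """Note On: status 0x90 plus the zero-based channel, then note and velocity."""
    return bytes([0x90 | (channel - 1), note & 0x7F, velocity & 0x7F])


def control_change(cc, value, channel=CHANNEL):
    """Control Change: status 0xB0 plus the zero-based channel, then CC number and value."""
    return bytes([0xB0 | (channel - 1), cc & 0x7F, value & 0x7F])


def pitch_bend(value, channel=CHANNEL):
    """Pitch Bend: 14-bit value (0-16383, 8192 is centre), sent LSB first."""
    value = max(0, min(16383, value))
    return bytes([0xE0 | (channel - 1), value & 0x7F, (value >> 7) & 0x7F])


def message_for(group, index, value=127):
    """Build the message for the index-th control (zero-based) in a group."""
    kind, numbers = LAYOUT[group]
    number = numbers[index]
    if kind == "note":
        return note_on(number, value)
    return control_change(number, value)


if __name__ == "__main__":
    print(message_for("triggers", 0).hex(" "))   # first pad
    print(message_for("knobs", 0, 64).hex(" "))  # knob 1 at half travel
    print(pitch_bend(8192).hex(" "))             # pitch bend centred
```

Because everything is on channel 8, the status bytes come out as `0x97` for the pads, `0xB7` for the CC controls and `0xE7` for the pitch bend.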

TouchOSC Demo.zip (213.5 KB)

It might be useful to have an iOS MIDI & MPE category as there are many capable iOS controller apps including TB MIDI Stuff, Lemur, MIDI Designer 2 Pro, and MobMuPlat. For some of these apps you need a PC to design the surfaces and for others you can design the surface on your iOS device.

TouchOSC has been on my app-purchase radar for quite some time and for some reason I have not snagged it yet. Fixing that today based on your post.

I was just having a look at using an Audulus synth in Beatmaker 3 for iOS. Do I need to run TouchOSC in between the two in order to do parameter modulation on knobs in the DAW?

You can connect directly to Audulus from the MIDI out of TouchOSC; this is configured in TouchOSC’s MIDI settings.

I wanted to use Audulus in Beatmaker 3 with the ability to draw automation of knob changes. From what you say here, I take it that I need to use TouchOSC as a go-between?

You can control Audulus 3 with MIDI directly with TouchOSC or Beatmaker 3 or a combination of both.

It would be so nice if TouchOSC was an AUv3, because in Beatmaker 3 all the knobs are automatically available and can be assigned to macros. Getting an Audulus sequencer going in an iOS DAW, then drawing some automation over time, seems like a good idea to me.

You have to distinguish between parameter automation and MIDI automation. Most DAWs can do both, but only parameters are reported to the host.

An AU can define a set of parameters which can be manipulated by the host. For example, a filter might have resonance, cutoff frequency, etc. The host (usually a DAW) can read these parameters and then manipulate them to change the behavior of the AU. Hosts can also provide a way to save a set of parameters as a “preset”.

MIDI automation, on the other hand, involves the host sending a series of MIDI commands to the AU. Usually, for automation, the commands sent are MIDI PC (program change) or CC (control change) messages. The message will cause the AU to change whatever parameter is associated with it. The host, however, has no way to know which MIDI commands the AU will respond to or what they do. There are standard meanings for some messages, but mostly it’s up to the creator to decide which messages do what.

The knobs you are seeing in Beatmaker are parameter automation, and the actual display of the AU is handled by the AU itself. TouchOSC is a MIDI and OSC tool and doesn’t have a pre-defined set of parameters. I’m not real familiar with Beatmaker, but most DAWs can record incoming MIDI and then play it back. So, although you would have to switch screens, Beatmaker should be able to record adjustments you make using TouchOSC and then replay them later. If it were an AU, TouchOSC could offer a display that could be seen within Beatmaker, but it might make UI design in TouchOSC a bit more challenging. It’s possible but probably not too likely.
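The difference between the two kinds of automation can be sketched with a toy model in Python. This is not Apple's Audio Unit API: `ToyFilterAU`, its method names, the CC74 assignment and the linear cutoff scaling are all assumptions made up for illustration. The point is only that the host can list and set the published parameters, while the CC mapping is private to the plugin.

```python
# Toy model of parameter automation versus MIDI automation.
# Hypothetical class; it does not mirror Apple's actual AU interfaces.

class ToyFilterAU:
    def __init__(self):
        # Published parameters: the host can discover, read and write these.
        self._params = {"cutoff": 1000.0, "resonance": 0.5}
        # Private CC mapping: the host has no way to query this table.
        # CC74 for cutoff is an arbitrary choice made by this toy plugin.
        self._cc_map = {74: "cutoff"}

    def parameter_names(self):
        """What a host would see when it asks for the parameter list."""
        return sorted(self._params)

    def get_parameter(self, name):
        return self._params[name]

    def set_parameter(self, name, value):
        """Parameter automation: the host addresses a parameter by name."""
        if name not in self._params:
            raise KeyError(name)
        self._params[name] = float(value)

    def handle_midi(self, message):
        """MIDI automation: the host sends bytes and the plugin decides what they mean.

        Returns True if the message changed something, False if it was ignored.
        """
        status, data1, data2 = message
        is_control_change = (status & 0xF0) == 0xB0
        if is_control_change and data1 in self._cc_map:
            # Scale the 7-bit value linearly onto 20 Hz .. 20 kHz.
            self._params[self._cc_map[data1]] = 20.0 + (data2 / 127.0) * (20000.0 - 20.0)
            return True
        return False


if __name__ == "__main__":
    au = ToyFilterAU()
    print(au.parameter_names())                     # visible to the host
    au.set_parameter("resonance", 0.9)              # parameter automation
    print(au.handle_midi(bytes([0xB7, 74, 127])))   # a CC the plugin happens to map
    print(au.handle_midi(bytes([0xB7, 20, 127])))   # a CC the plugin ignores
```

A host driving `set_parameter` knows exactly what it is changing. A host sending CC messages can only find out from the plugin's documentation (or by trial and error) which controller numbers do anything.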

Just to add a bit more and reinforce what you’ve said: there are AU apps that send and receive MIDI. In some AU host apps (e.g. AUM) you can route MIDI CC and notes to control the exposed AU parameters. The AU parameters are different from the standard note on/off, velocity, and modulation MIDI that most AU synths and some AU effects respond to. That standard MIDI doesn’t require setting up any specific routing, and from the user’s perspective it’s not much different from an IAA instrument port.

AU Presets

- AU apps also vary as to whether they can save presets that can be used in any AU host that uses them. (e.g. ReSlice)

- Some AU hosts have a way to share AU presets between devices or other users.

- Some AU host apps can save presets. (e.g. AUM)

- Some save the state but do not save them as an AU specific preset you can use in another setup. (e.g. Audiobus 3)

AU MIDI

- Not all AU apps expose MIDI AU parameters.

- Not all AU host apps access AU parameters.

- Not all AU apps have non-parameter MIDI control.

- Not all AU apps send and/or receive MIDI.

- AU host apps have different ways of doing MIDI control of AU parameters.

- Some AU host apps support NRPN controller protocols, which offer better resolution than standard 7-bit MIDI CC messages.

- Non-MIDI control of AU parameters (e.g. Beatmaker 3 AU parameter control via recording BM3 macro knob movements) has significantly better resolution than standard MIDI.
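To put numbers on the resolution point in the list above, here is a small Python sketch. A plain CC message carries a 7-bit value, while an NRPN write carries a 14-bit value split across two data CCs. The CC numbers used (99, 98, 6, 38) are the standard MIDI 1.0 NRPN controllers; the function names and the example parameter number are illustrative assumptions.

```python
# Resolution of a plain CC versus an NRPN write, plus the CC sequence an
# NRPN write is made of. Function names are illustrative.

CC_STEPS = 2 ** 7      # a single CC value is 7 bits wide
NRPN_STEPS = 2 ** 14   # NRPN data is 14 bits wide (MSB + LSB)


def quantize(position, steps):
    """Map a control position in 0.0..1.0 onto the nearest available step."""
    return round(position * (steps - 1))


def nrpn_messages(parameter, value, channel=1):
    """Return the four Control Change messages that set one NRPN value.

    CC99/CC98 select the parameter (MSB/LSB); CC6/CC38 carry the data (MSB/LSB).
    """
    if not 0 <= parameter < NRPN_STEPS:
        raise ValueError("parameter must fit in 14 bits")
    if not 0 <= value < NRPN_STEPS:
        raise ValueError("value must fit in 14 bits")
    status = 0xB0 | (channel - 1)
    return [
        bytes([status, 99, parameter >> 7]),
        bytes([status, 98, parameter & 0x7F]),
        bytes([status, 6, value >> 7]),
        bytes([status, 38, value & 0x7F]),
    ]


if __name__ == "__main__":
    print(CC_STEPS, NRPN_STEPS)
    print(quantize(0.5, CC_STEPS), quantize(0.5, NRPN_STEPS))
    for message in nrpn_messages(parameter=1, value=8192, channel=8):
        print(message.hex(" "))
```

The trade-off is that one NRPN change costs four messages instead of one, which is part of why hosts differ in whether they support it.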

AU Screen Mode

- AU static size which doesn’t change when the AU host window resizes.

- AU Resizes when the host window resizes.

- AU full screen mode, which can vary depending upon how much of the iOS device screen the AU host reserves for its own GUI versus the AU GUI.

- Not all AU host apps support all AU screen modes.

AU and AU host Built-In Keyboards

- AU app has a keyboard or not.

- AU host has a keyboard or not.

AU App Standalone

- Some AU apps only run within an AU host.

- Some AU developers have created a shell under which the AU app runs in its standalone mode (e.g. Zeeon).

- Some AU apps support other iOS music standards (IAA, Audiobus)

It’s my understanding from developers that many of the inconsistencies in AU implementations can be laid at the feet of Apple, who didn’t follow through on developing or documenting AU standards adequately.

Good post. I’ve been perusing the Apple AUv3 docs myself recently and there are definitely some areas for improvement in the docs. You can find some detailed information from Apple regarding v2, but Apple doesn’t seem to be doing nearly as much when it comes to documentation these days. This is a pretty good overview of AU in general, but it’s pretty old: https://developer.apple.com/library/content/documentation/MusicAudio/Conceptual/AudioUnitProgrammingGuide/Introduction/Introduction.html

I was really trying to explain the difference between the parameters an AU exposes as part of the AU interface, and MIDI messages an AU or AU host might send or receive. There is provision in the AU spec for defining whether a given AU responds to MIDI commands, whether it is a MIDI instrument or effect, and a mechanism for sending and receiving MIDI data from the host. There are a few common MIDI commands that are defined as parameters in the Apple docs: things like all notes off, reset controllers, volume, pitch bend, etc. But there is no requirement that a specific AU define these.

Preset saving is an area where Apple and the developers are somewhat at odds. There are two types of presets. Factory presets are built in to the AU and are predefined. User presets are collections of settings created by the end user. Apple intends that hosts save user presets and provides mechanisms to simplify the process. Whether a host actually implements preset saving, and how, is up to the host. Many developers feel that the AU itself should save the presets, but the way the AU spec works makes this a difficult task. I expect that a lot of this will be ironed out as the v3 spec matures.

I believe having presets saved in the AU app is the way to go, so users don’t have to recreate presets in each AU host app or setup, and being able to transfer them between devices or users would be best. Hopefully, as the mobile market matures, Apple will invest more in developer support to remain competitive as a higher-priced, primarily proprietary-oriented company. The focus will shift to new software functionality rather than new hardware features.
