How squished are they? When I played the file the notes were within a 5th of each other most of the time, so 7 semitones, out of 88 (made up realistic seeming number), would be 1/10th the total height. Should they be normalized? I was going for pitch accuracy when I built the file.

Pretty cool!

Web MIDI has taken off and works in the Chrome browser or the Web MIDI app on iOS. Here’s a screenshot and a video of iseqtribe running in the Web MIDI app and controlling the iELECTRIBE app.

https://youtu.be/5RlacgVVWBg

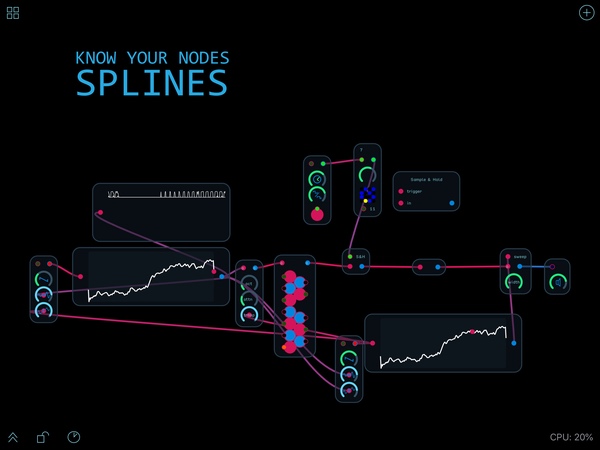

It might be useful to create splines that replicate the MIDI values themselves and then users could use the output from those MIDI splines to process it in ways they would with regular MIDI.

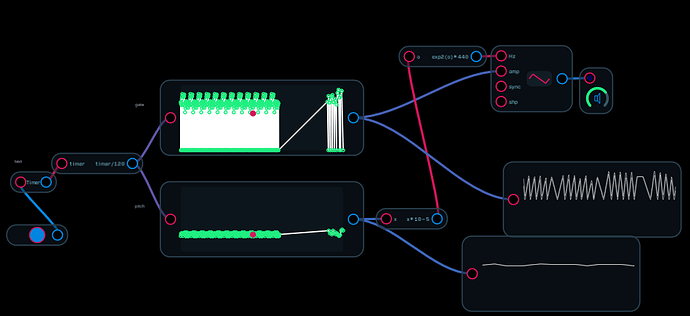

I have updated the build_audulus_wavetable_node tool to add MIDI support. It generates a gate spline (based on note on/off and velocity) and a pitch spline (MIDI notes scaled to 1/o, scaled down to the 0…1 range). The pitch spline includes an Expr node to move the output back to 1/o range.
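For anyone curious what that conversion could look like, here is a rough Python sketch of the idea, not the tool's actual code. The function names, the note tuple layout, and the choice of A4 (MIDI note 69) as 0 on the 1/o scale are all assumptions made for illustration. It turns a list of notes into gate and pitch points in the 0…1 range and shows the kind of scaling an Expr node would need to undo.

```python
A4_MIDI = 69  # assumption: 0 on the 1/o scale corresponds to A4 (MIDI note 69)


def midi_to_octaves(note):
    """Convert a MIDI note number to octaves relative to A4."""
    return (note - A4_MIDI) / 12.0


def build_splines(notes):
    """Build pitch and gate spline points from (start, end, midi_note, velocity) tuples.

    Times are in seconds. Returned x and y values are scaled to 0..1.
    Also returns lo and span so the pitch output can be mapped back to octaves.
    """
    total = max(end for _, end, _, _ in notes)
    octaves = [midi_to_octaves(note) for _, _, note, _ in notes]
    lo, hi = min(octaves), max(octaves)
    span = (hi - lo) or 1.0  # avoid dividing by zero when every note is the same

    pitch, gate = [], []
    for (start, end, _note, velocity), octave in zip(notes, octaves):
        x_on, x_off = start / total, end / total
        pitch.append((x_on, (octave - lo) / span))
        gate.append((x_on, velocity / 127.0))  # note on: gate height follows velocity
        gate.append((x_off, 0.0))              # note off: gate returns to zero
    return pitch, gate, lo, span


def restore_octaves(y, lo, span):
    """What an Expr node after the pitch spline would compute: back to the 1/o range."""
    return y * span + lo


notes = [(0.0, 0.5, 57, 127), (0.5, 1.0, 81, 64)]
pitch, gate, lo, span = build_splines(notes)
```

With these two notes (A3 and A5), the pitch points land at 0 and 1, and `restore_octaves` maps them back to -1 and +1 octaves.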

Here is sample output: test.audulus (26.2 KB)

gem install build_audulus_wavetable_node

to update, and

build_audulus_wavetable_node -m somefile.mid

to use.

Wow this sounds crazy! So I think the algorithm is adding slopes in between the note points and gates. Is there a way to make the gates and notes more plateau-like?

Well, it’s really just adding the points, and the spline node’s default behavior is to add the slopes. I can force it to be more stepwise… just more work.  Or, as managers love to say, it’s a simple matter of programming.

I guess a work around would be to remove velocity from the gate points and then you could sample and hold the 1/oct signal when the gate==1. Would that be less work?
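To make the workaround concrete, here is a small Python simulation of sample and hold. It is only a sketch of the signal logic, not an Audulus patch, and the function name and threshold parameter are made up for the example. It assumes the gate reaches exactly 1 at each note on, which is why velocity would have to come out of the gate points first.

```python
def sample_and_hold(pitch, gate, threshold=1.0):
    """Hold the pitch value captured each time the gate rises to the threshold."""
    held = 0.0
    previously_high = False
    out = []
    for p, g in zip(pitch, gate):
        high = g >= threshold
        if high and not previously_high:
            held = p  # rising edge: capture the current pitch
        previously_high = high
        out.append(held)
    return out


# A sloped pitch signal that is only "correct" at the moments the gate is high.
sloped_pitch = [0.0, 0.25, 0.5, 0.75, 1.0]
gate = [1.0, 0.0, 0.0, 0.0, 1.0]
stepped = sample_and_hold(sloped_pitch, gate)
```

The sloped values between the two note points are ignored, so the output stays flat until the next gate.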

ok that slipped past me, that is solid gold!

Not really less work. The correct solution is to add an extra point just before the transition to the next point so you get a microsecond transition between points. I’ll see if I can make that happen.
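Roughly, the extra-point idea could look like the Python sketch below. This is an illustration under assumptions, not the tool's implementation: `stepify` and `eps` are hypothetical names, and points are taken to be `(x, y)` pairs sorted by x. Before each point whose value differs from the previous one, it adds a point at the old value just ahead of the transition, so the spline stays flat and then jumps.

```python
def stepify(points, eps=1e-6):
    """Insert a hold point just before every change in value.

    points: list of (x, y) pairs sorted by x.
    eps: how far before the transition the hold point sits (x units).
    """
    out = []
    for i, (x, y) in enumerate(points):
        if i > 0:
            prev_x, prev_y = points[i - 1]
            hold_x = x - eps
            # Only add the hold point if the value changes and there is room for it.
            if prev_y != y and hold_x > prev_x:
                out.append((hold_x, prev_y))
        out.append((x, y))
    return out


stepped_points = stepify([(0.0, 0.0), (0.5, 1.0), (1.0, 1.0)], eps=0.125)
```

A smaller `eps` gives a sharper edge; the value here is large only so the inserted point is easy to spot.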

Here’s a video tutorial on creating .wav files to auto generate splines by sampling a few milliseconds of audio using AudioShare and TwistedWave on iOS followed by using the single cycle wave file as input for the @jjthrash program on macOS to generate the code per request by @robertsyrett.

Been quietly watching this develop – pretty much way over my head. At this point what is the chance of designing wavetables in WaveEdit and then running the tables through @jjthrash’s github project? Is the end result splines that are too CPU intensive for iOS?

I’ve already made a waveform in WaveEdit and run it through my tool. Works well! As for CPU intensiveness, the WaveEdit waveform only does 256 samples, so it’s not so bad. Worth a try, anyway.

There goes my evening. After dinner you will probably see me kicking around asking questions about compiling your work.

Are you familiar with GitHub? It’s the starting point. You need to clone the script @jjthrash created to your computer as the first step.

I have been avoiding GitHub, as every time I try to compile something I end up spending a bunch of time troubleshooting the compile. I better wait till after dinner.

I’d never used it before and was able to figure it out so let me know if you run into problems.

Here’s a modification of the original @robertsyrett patch along with a video.

spline CPU friend 6.audulus (287.6 KB)

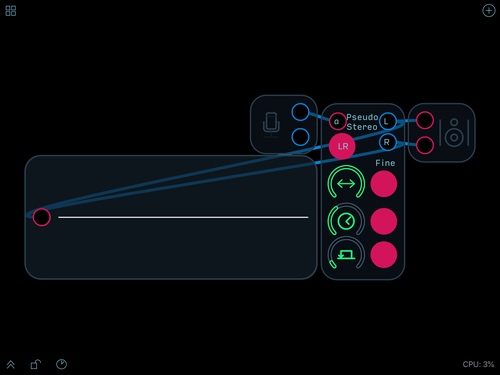

Here’s the PseudoStereo module used to convert the mono screen recording to stereo.

PseudoStereoIAA.audulus (11.7 KB)

That video is amazing! What program did you use to make the kaleidoscope?