Have you seen this yet? Official software versions of the NE modules o_0

I did! I don’t have Reason and haven’t used it for over 10 years probably, but this got me thinking about it again. They look like really solid rack extensions for it.

Why not a Buchla 259e? Or a 291e?

2nd request: Make Noise Phonogene and Erbe-Verb

Thanks

Weekend suggestions:

Mutable Rings

Mutable Clouds

Hey @jbaptiste64,

In case you missed it, @robertsyrett made a great module that would probably meet your Mutable Rings request here: uKarplus-Strong module

I’d love to see an Audulus clone of the Moog Model 15. To be fair, it’s a great iOS app on its own, but there are certain features I wouldn’t mind adding to it, like a couple more LFOs, a sequencer and maybe some more effects?

Why don’t you mess around with building a subtractive synth out of some of the modules and that moog filter? Or is that too obvious?

It WOULD be cool to have an all-in-one module for that, though! Just a neat thing to have. I assume we could make a version of each module on its own, plus one where they’re all in a single patch, with patch points and laid out much like the Model 15.

Why? Because it is an analog-inspired digital drum module.


Also, because it seems as though most of this stuff has already been done around here; it would just need to be put into a tight little module. Because the Basimilus receives such favourable reviews, I think it would be in Audulus’ interest to flex its muscles and show that it could do it, not to mention that it could be a foundation for something even more interesting. Myself, I was over the moon when I saw @stschoen’s Akemie’s Castle. There is just something exciting about a faithful simulation. That, and you can watch YouTube videos on the module in question and try some of the ideas out in Audulus, which helps you understand what is happening in the patches.

The Oscimillator patch I made started out as a BIA clone, but I couldn’t find enough documentation on the math behind the different modes.

Also, as is typical of digital modules, it has multiple modes (three, in this case). Replicating them would probably mean massively complicating the inner workings of the Audulus module and draining CPU. I found that multiple instances of the Oscimillator were enough for me, but I suppose it might be a challenge for someone to take on.

It’s not a full take on Clouds, but Michael Hetrick ported the Oliverb algorithm to Reaktor Blocks, which (if I’m not mistaken) is the same reverb used in Clouds.

Maybe someone defter than I am can port it from Reaktor to Audulus. Or maybe I will someday (after I learn DSP a little better).

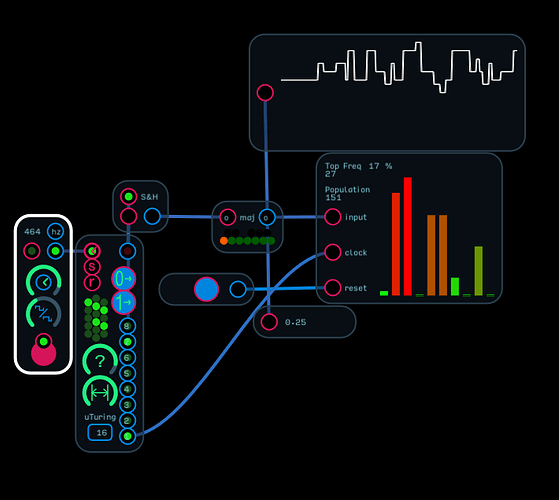

I’ve been playing around with Marbles since it came out on VCV Rack, and it has a very interesting take on random melodies. You play it a bunch of notes from your sequence, say 50 notes, and it remembers the notes and how often each one occurs, and constructs a scale from them. The quantized stepped output then plays back notes from that scale at random, distributed along a bell curve. You can bias the probability toward one end of the curve or the other to control which notes get played. Layered on top of this, the quantized step output has a length, like the Turing Machine, and can either be locked into a loop or given a probability of changing.
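To make the playback idea concrete, here is a minimal Python sketch. It is not how Marbles is actually implemented; it simply assumes the bell curve is a Gaussian over pitch, whose centre is shifted by a `bias` control and whose width is set by a `spread` control, and that each random pitch is snapped to the nearest note of the learned scale. The function and parameter names are hypothetical.

```python
import random

def learned_scale_note(scale, spread, bias, rng, low=48, high=72):
    """Draw one MIDI note from a bell curve and snap it to the learned scale.

    scale  -- non-empty set of pitch classes (0-11), e.g. {0, 2, 4, 5, 7, 9, 11}
    spread -- standard deviation of the bell curve, in semitones
    bias   -- moves the centre of the curve from -1.0 (bottom of range) to +1.0 (top)
    rng    -- a random.Random instance, so results can be seeded
    """
    # Centre of the bell curve, shifted toward one end of the range by `bias`.
    centre = (low + high) / 2 + bias * (high - low) / 2
    raw = rng.gauss(centre, spread)
    raw = min(max(raw, low), high)  # clamp to the playable range
    # Every note in range whose pitch class belongs to the learned scale.
    candidates = [n for n in range(low, high + 1) if n % 12 in scale]
    # Quantize: pick the scale note nearest to the random pitch.
    return min(candidates, key=lambda n: abs(n - raw))


rng = random.Random(1)
c_major = {0, 2, 4, 5, 7, 9, 11}
melody = [learned_scale_note(c_major, 3.0, 0.5, rng) for _ in range(8)]
print(melody)
```

A small `spread` keeps the melody clustered around the centre; pushing `bias` toward +1 or -1 moves that cluster toward the top or bottom of the range.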

Do you think such an approach would be viable with resettable S&H nodes? I think this would be awesome to have in Audulus, even if you lose your scale when you close the patch.

Impressive module. At first glance it looks doable in Audulus, although I suspect it would be rather CPU-intensive. As a first step, I would probably build a stepped array of S&H nodes to capture a sequence. You would need at least one array for the random gates and three for the voltages. It might have to wait until we have more efficient data storage.
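A stepped array of S&H nodes behaves roughly like the ring buffer below. This is a Python model rather than an Audulus patch: each slot stands in for one S&H node, and `change_prob` mimics the lock/probability behaviour described for Marbles and the Turing Machine. The class and parameter names are hypothetical; you would run one instance per gate or voltage output.

```python
import random

class SteppedSampleHold:
    """A ring of sample-and-hold slots that loops a captured sequence.

    length      -- number of steps in the loop (like the Turing Machine's length)
    change_prob -- chance, per step, that the held value is re-sampled
                   (0.0 = locked loop, 1.0 = new random value every step)
    """

    def __init__(self, length, change_prob, rng=None):
        self.rng = rng if rng is not None else random.Random()
        # Fill every slot with an initial random value in [0, 1).
        self.slots = [self.rng.random() for _ in range(length)]
        self.change_prob = change_prob
        self.pos = 0

    def step(self):
        """Advance one clock tick and return the value held at this step."""
        if self.rng.random() < self.change_prob:
            self.slots[self.pos] = self.rng.random()  # re-sample this slot
        value = self.slots[self.pos]
        self.pos = (self.pos + 1) % len(self.slots)  # wrap around the loop
        return value


locked = SteppedSampleHold(length=8, change_prob=0.0, rng=random.Random(7))
loop = [round(locked.step(), 3) for _ in range(16)]  # the same 8 values, twice
print(loop)
```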

decimal frequency capture of uTuring.audulus (306.0 KB)

The whole Marbles unit would definitely be overkill for Audulus, I think. But what makes me think the melodic learning might be possible is the Top Frequency of occurrence module @dcLargo made a while back. It could be stretched to 12 buckets as opposed to 10, but from there I’m unsure how to make a bell curve out of the data or biasing of said curve.

If you used 12 buckets (one for each note), you could certainly capture the number of samples for each. I’m not sure how you would determine whether a given scale is major or the relative minor, since they both contain the same notes. I’ll have to read the manual for Marbles. At this point I’m a bit unclear as to what the bell curve is supposed to apply to.
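The 12-bucket count could be sketched in Python as follows. This assumes the captured notes arrive as frequencies in Hz and uses standard 12-tone equal temperament with A4 = 440 Hz; if a patch carries pitch in some other form, only the hypothetical `pitch_class` helper would need to change.

```python
import math

def pitch_class(freq_hz):
    """Map a frequency to a pitch class 0-11 (0 = C), assuming A4 = 440 Hz."""
    midi = 69 + 12 * math.log2(freq_hz / 440.0)  # fractional MIDI note number
    return round(midi) % 12

def note_histogram(freqs_hz):
    """Count how many captured notes fall into each of the 12 buckets."""
    buckets = [0] * 12
    for f in freqs_hz:
        buckets[pitch_class(f)] += 1
    return buckets


print(note_histogram([440.0, 880.0, 261.63, 329.63]))
# [1, 0, 0, 0, 1, 0, 0, 0, 0, 2, 0, 0]
```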

It seems like that’d sort itself out if you forced a key onto it. An algorithm might assume the first note played is the tonic, and then the others could fall into place around it. Does that make sense?
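The "first note is the tonic" idea amounts to rotating every pitch class so the first one becomes 0. A minimal Python sketch, with a hypothetical function name and pitch classes as integers 0-11 (0 = C):

```python
def intervals_from_first(notes):
    """Treat the first note played as the tonic and express the rest relative to it.

    notes -- pitch classes (0-11) in the order they were played
    Returns (tonic, sorted list of distinct intervals in semitones above the tonic).
    """
    tonic = notes[0]
    return tonic, sorted({(n - tonic) % 12 for n in notes})


MAJOR = [0, 2, 4, 5, 7, 9, 11]
tonic, shape = intervals_from_first([2, 4, 6, 7, 9, 11, 1])  # D major, starting on D
print(tonic, shape == MAJOR)  # 2 True
```

Comparing the resulting interval set against known scale shapes (major, natural minor, and so on) then tells you which scale the notes fit on that tonic.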

Which notes, and how many, go in the scale? Based on frequency of occurrence, the winners are those above some ranking, or, more likely, you choose from the top-ranked note on down until you reach some agreed-upon number of notes. If you hit 12, though, you’ve gone too far. Seven or eight seems a good place to stop, although I prefer seven. Maybe using the log of the frequency of occurrence* could help visualize the winners.

Still, calling the first note played the key, regardless of its frequency of occurrence, would be a reasonable start. It’s hard to say from frequency of occurrence alone what the key would be, apart from cowboy songs, of course!

*(Skipping the log of zero, of course: map zero occurrences to log(1) = 0. That still gives a proper ranking, because a note that occurs only once also scores zero, which is appropriate since a single occurrence is statistically “next to nothing.”)
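Here is one way to sketch the "top seven by log of occurrence" rule in Python, assuming 12 occurrence counts with index 0 = C and the footnote's convention that zero occurrences score 0. Because log is monotonic, the ranking matches ranking by raw counts (apart from the 0/1 tie); the log scores are mainly useful for display. The function name is hypothetical.

```python
import math

def top_notes(buckets, count=7):
    """Return the `count` best-ranked pitch classes, scored by log of occurrence.

    buckets -- 12 occurrence counts, index 0 = C
    Zero occurrences are scored 0.0 because log(0) is undefined; that is the
    same score a note heard once gets, since log(1) = 0.
    """
    scores = [math.log(c) if c > 0 else 0.0 for c in buckets]
    # Highest score first; sorted() is stable, so ties keep pitch-class order.
    ranked = sorted(range(12), key=lambda pc: scores[pc], reverse=True)
    return sorted(ranked[:count])


counts = [10, 1, 5, 0, 8, 3, 0, 9, 0, 4, 0, 2]
print(top_notes(counts))  # [0, 2, 4, 5, 7, 9, 11]
```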

Using the first note would certainly work in many cases. You might also be able to determine the key by examining how often each note occurs. In most cases I would expect the tonic, third and fifth to occur most often. Since these differ between a major key and its relative minor, you might be able to tell them apart that way.
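A rough Python sketch of that triad-weighting idea: score each of the 24 major and minor keys by summing the counts of its tonic, third and fifth, and keep the best. This is a simple heuristic assumed for illustration, not a full key-finding algorithm; it expects the 12 occurrence counts with index 0 = C, and all names are hypothetical. Note that C major and A minor share every note but not their tonic triads, which is what lets the scores separate them.

```python
MAJOR_TRIAD = (0, 4, 7)  # tonic, major third, fifth
MINOR_TRIAD = (0, 3, 7)  # tonic, minor third, fifth
NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def guess_key(buckets):
    """Score all 24 major/minor keys by how often their tonic, third and fifth occur."""
    best = None
    for tonic in range(12):
        for mode, triad in (("major", MAJOR_TRIAD), ("minor", MINOR_TRIAD)):
            score = sum(buckets[(tonic + i) % 12] for i in triad)
            if best is None or score > best[0]:  # first key wins a tie
                best = (score, NAMES[tonic], mode)
    return best[1], best[2]


print(guess_key([10, 0, 3, 0, 8, 2, 0, 9, 0, 4, 0, 2]))  # ('C', 'major')
print(guess_key([8, 0, 2, 0, 7, 3, 0, 2, 0, 10, 0, 2]))  # ('A', 'minor')
```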